Get In-depth Biotech Coverage with Timmerman Report.

12 Jan 2026

Sovereign Risk: The Geopolitical Price of Outsourcing the Biotech Engine

John Cassidy, general partner, Kindred Capital

On the surface, delegates at this year’s JP Morgan Healthcare Conference have reason to be pleased. The Nasdaq Biotech Index was up 30% last year. After years of public market woes, it looks like there is light at the end of the tunnel.

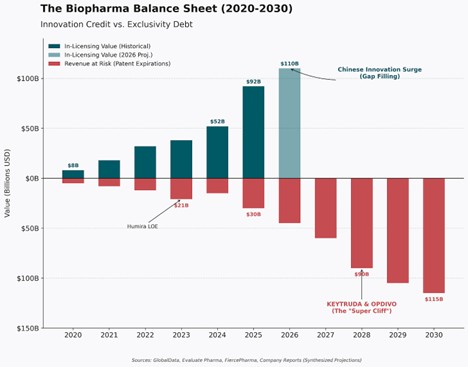

Some structural issues still exist – 10 years to get a molecule to clinic, regulators that need a shot in the arm, and (I’d argue) a negative enterprise value on most new programs. But Big Pharma isn’t stupid. Patent cliffs are looming, pipelines are thinning, and the market is hungry for new assets.

So the industry did the rational thing: it looked East.

The new playbook is simple: license clinical-stage assets from China and run them globally.

Reuters pegged the trend: in just the first half of 2025, U.S. drugmakers signed 14 China-licensing deals worth $18.3 billion, compared with just two deals the year before. Morgan Stanley framed it cleanly: China has gone from “generics factory to innovation engine.” And Western pharma wants in.

Some of these assets will be great. Patients will benefit. There will be headlines about “win-win” globalization.

But this licensing wave is also, in many cases, window dressing. It tells a story about where molecules are sold, not where they’re made. It celebrates downstream commercial rights while ignoring upstream capacity loss.

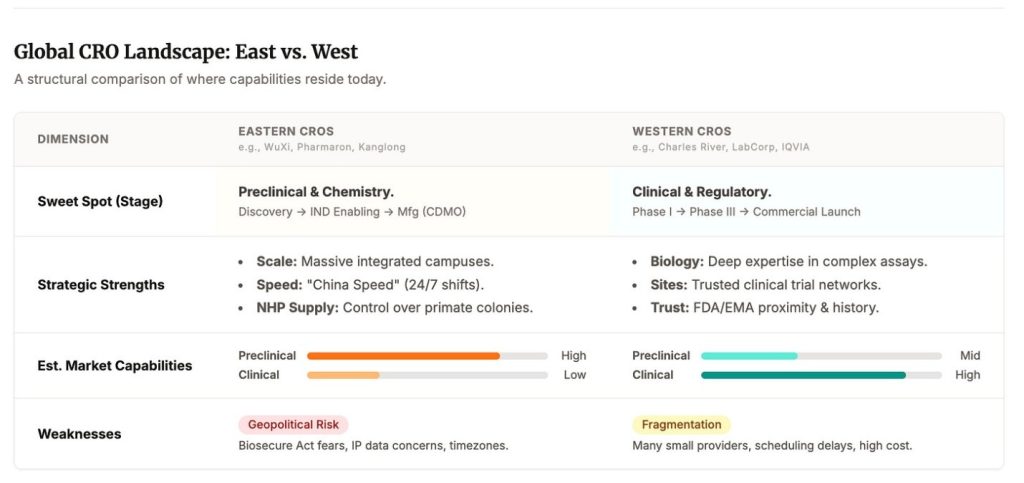

One nuance matters here. The West still runs the clinical machine. Late-stage trial execution, global site networks, data management, regulatory choreography, pharmacovigilance — this is still a Western strength. This piece is not arguing we forgot how to run Phase III. It’s arguing we made a quieter bet: that preclinical biology is a commodity you can safely rent.

We funded the factory layer

Clinical dominance can hide preclinical dependency. The clinical layer is visible, audited, and legible to boards. The preclinical layer is where the learning curve compounds, and where “process innovation” quietly becomes the innovation.

Western companies, in the name of asset-light models and operational efficiency, spent years transferring preclinical execution into Chinese platforms. Not just isolated tasks, but entire modalities. The result?

We now have Western companies licensing from ecosystems that Western capital helped train.

This wasn’t one grand conspiracy. It was a thousand “reasonable” decisions:

“We’re asset-light.”

“We’ll rent capacity.”

“We don’t need in-house biology.”

“This CRO is faster.”

“This is just execution; the IP stays with us.”

Apple in China

If this feels familiar, that’s because it is. We’ve seen this movie before.

In the 2000s, Apple built the world’s most iconic consumer hardware. But it built it in someone else’s factory. That choice, logical at the time, drove speed, scale, and margins.

It also seeded something harder to unwind: dependency. Operational know-how leaked. Local capability compounded. Today, reversing that reliance takes decades, not quarters.

Tesla walked the same path. In 2019, it became the first Western automaker to build a wholly owned factory in China. In 2025, it lost global EV leadership to BYD, a company born in part from the ecosystem Tesla helped fund.

Now biotech stands at the same crossroads.

The product isn’t phones. It’s the infrastructure that discovers, tests, and manufactures medicine.

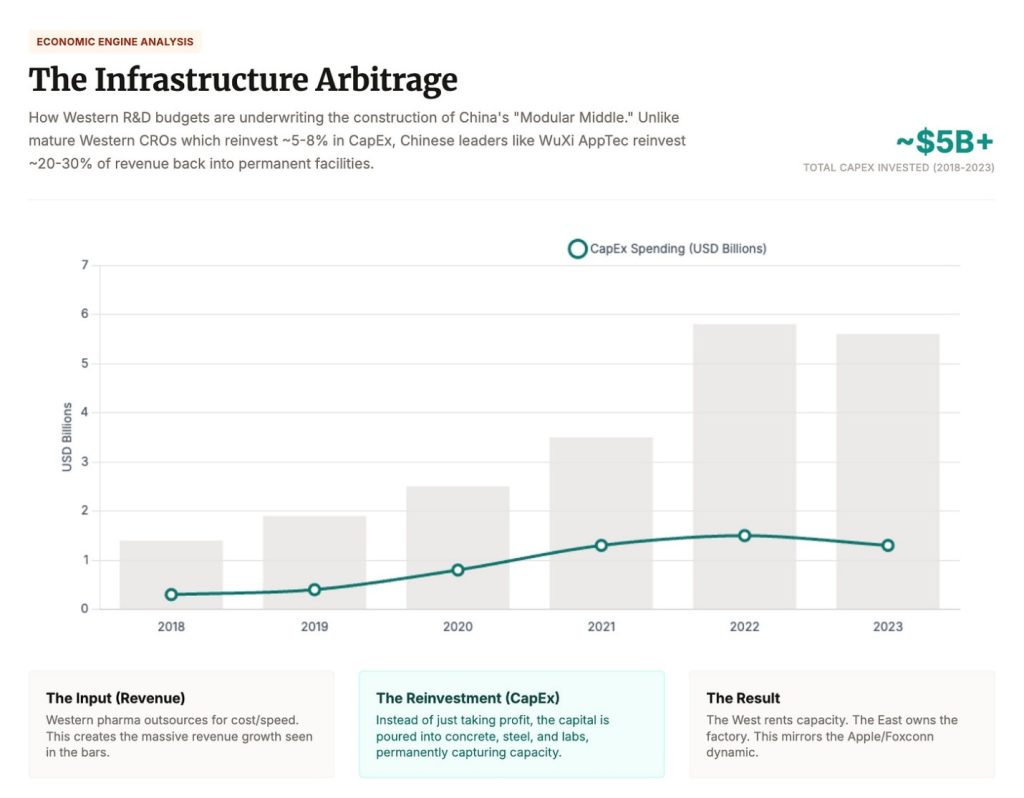

Take WuXi AppTec. In 2024, it synthesized over 460,000 new compounds. It booked RMB 25 billion ($3.6 billion) in revenue from U.S.-based customers, more than 60% of its total. It reinvests 23–25% of that revenue into expanding its own capacity. That’s self-funded CapEx at geopolitical scale.

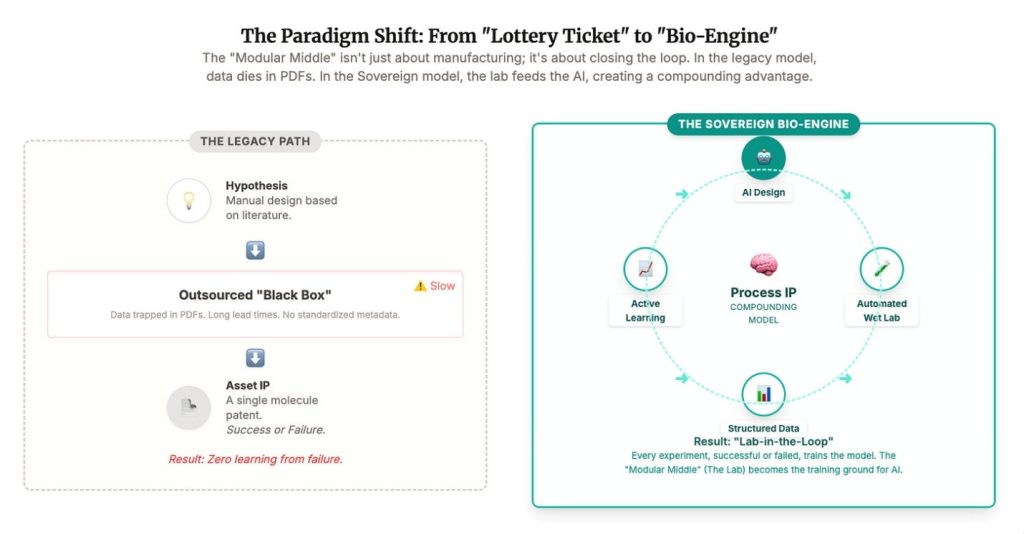

Now layer in AI. Drug discovery is becoming a loop: design → make → test → learn → redesign. The tighter that loop, the stronger the model. The better the wet lab integration, the better the output. Eli Lilly has embraced this and become the first $1 trillion market cap pharma (granted, this may be partly because of Novo’s missteps…).

When you outsource that loop, two things happen:

- First: your model breaks. Latency, batch effects, messy formats. AI runs on clean data. CRO execution introduces heterogeneity and misaligned incentives that break closed-loop learning. They poison the loop.

- Second: your IP leaks. A CRO that sees your inputs and your outputs has everything it needs. You’re not just outsourcing. You’re training your future competitor.
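For readers who think in code, here is a toy sketch of that loop. It is an illustration only, not anyone's actual pipeline: the single numeric "design", the hidden `TRUE_OPTIMUM`, and the `assay_noise` knob are all assumptions made up for the example, with noise standing in for the batch effects and heterogeneity described above.

```python
import random

TRUE_OPTIMUM = 7.0  # hypothetical "real biology"; the loop never sees this directly


def run_loop(rounds, assay_noise, seed=0):
    """Toy design -> make -> test -> learn loop over one numeric design parameter.

    Returns how far the final best design sits from the hidden optimum.
    """
    rng = random.Random(seed)
    best_design, best_reading = 0.0, float("-inf")
    for _ in range(rounds):
        # Design: propose a candidate near the current best design.
        candidate = best_design + rng.uniform(-2.0, 2.0)
        # Make + test: the assay readout is the true activity plus measurement noise.
        true_activity = -((candidate - TRUE_OPTIMUM) ** 2)
        reading = true_activity + rng.gauss(0.0, assay_noise)
        # Learn: keep the candidate only if its readout beats the best seen so far.
        if reading > best_reading:
            best_design, best_reading = candidate, reading
    return abs(best_design - TRUE_OPTIMUM)
```

Calling `run_loop(200, 0.0)` models a clean, tightly coupled loop; raising `assay_noise` (for example `run_loop(200, 5.0)`) lets you see how a noisy readout can mislead the learn step, because one lucky measurement gets locked in as "best". The numbers mean nothing in themselves. The point is only that the learn step is as good as the data feeding it.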

This is how virtual pharma becomes vulnerable pharma. And it’s how TechBio turns from a buzzword into a national priority.

Defensibility is not just what you can patent — it’s what you can repeatedly build, reliably execute, and directly distribute.

In AI x Bio, patents are just one layer. What matters now is what you can build, execute, and ship, over and over again.

Software already ran this experiment. Bill Gurley’s version is blunt: patents rarely decide outcomes. Elon’s move is even blunter: open source what others hoard, then win anyway. In practice, distribution plus iteration plus execution eats legal exclusivity for breakfast.

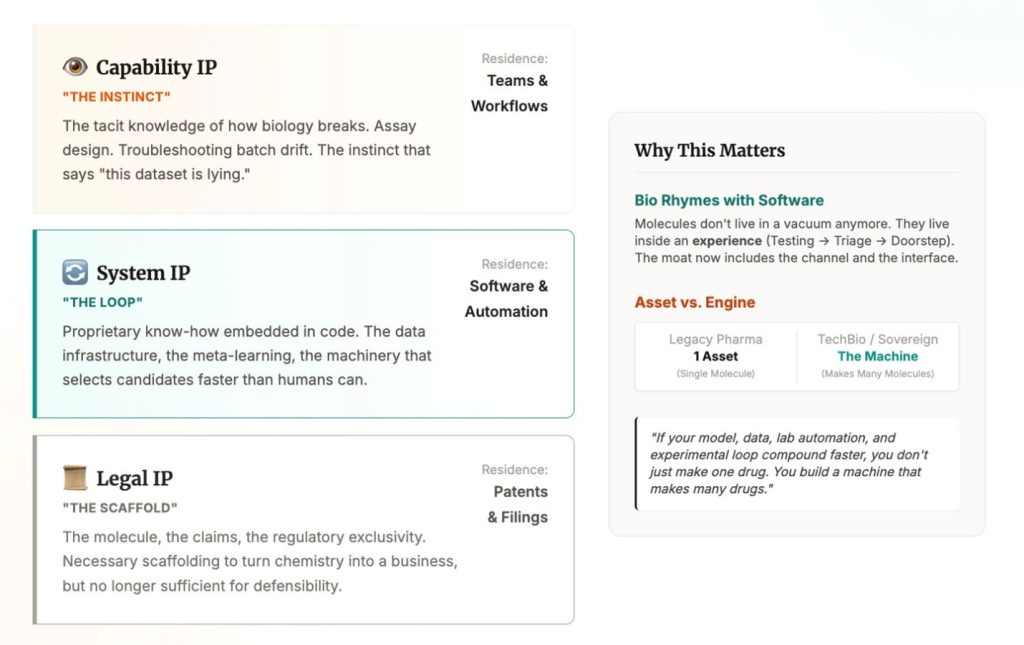

Pharma feels like the exception, because here IP is oxygen. Without patents, there’s no pricing power, no exclusivity window, and capital stops showing up. True. Also incomplete. Because “IP” is no longer a single thing. It’s a stack.

At the bottom is legal IP: the molecule, the claims, the regulatory scaffolding that turns a chemical into a business.

Above that is system IP (or proprietary know-how): the data, the loop, the meta learning. The machinery that lets you generate, select, and improve candidates faster than anyone else.

Above that is capability IP: the tacit knowledge of how biology breaks in the real world. Assay design. Troubleshooting. Batch drift. The instinct that tells you “this dataset is lying” before the slide deck does. This doesn’t live in a patent. It lives in teams and workflows.

To be fair, pharma has always been a know-how business. What’s changing is that AI and robotics can scale that know-how: faster data creation becomes faster insight generation, which becomes faster iteration, which becomes enduring advantage.

This is where biotech starts to rhyme with software. As distribution shifts, defensibility shifts with it. Molecules don’t live in a vacuum anymore. They live inside an experience: symptoms, testing, triage, prescription, and a box on your doorstep. In that world, molecule IP is necessary but not sufficient. The moat starts to include the channel and the interface.

Add AI and the boundary blurs again. In AI x Bio, value is shared between the asset and the engine that produced it. If your model, data, lab automation, and experimental loop compound faster, you don’t just make one drug. You build a machine that makes many drugs.

The uncomfortable implication is that control may drift away from the molecule holder and toward whoever owns the interface. If a platform can interpret diagnostics, recommend next steps, and steer treatment decisions, it can become the choke point without inventing the drug. None of this is new. Formularies, guidelines, and default pathways have always shaped outcomes. What’s new is that software and AI-mediated triage can encode those defaults and scale them, turning interface control into a tighter choke point.

The uncomfortable truth is that the Western system can select winners without selecting for upstream process excellence. You can win by licensing the molecule, then outcompeting others on reimbursement strategy, access, marketing, and distribution. That is profitable in the short term, but it quietly degrades the one thing that compounds: the industrial capability to discover, test, and make the next generation of drugs.

But systems still run on messy human capability. Biology is not a clean API. The loop only compounds if you own the ugly middle: how experiments actually get done, how failures get debugged, how quality is enforced, how intuition forms.

That’s why outsourcing is more than a margin decision. When you outsource enough of the wet work, you export capability IP. And it’s almost invisible because it shows up as OpEx, not CapEx. It doesn’t trigger alarms. It just compounds, until the industrial base you rented becomes the one that out-iterates you.

Over the last two decades we built a miracle: rapid hypothesis generation, global testing pipelines, molecules into patients faster than ever. But under the miracle was a trade. We outsourced the compounding parts of biology, the work that makes IP real. The result is we didn’t just globalize execution. We underwrote rival bio industrial capacity. Now the bill is coming due.

So the argument isn’t “IP doesn’t matter.” It’s that IP expanded. Patents still anchor value, but defensible advantage increasingly lives in the loop (system IP), in the craft (capability IP), and in the interface (distribution). If you don’t own those layers, you end up with strong claims and weak control. And in an era of AI-mediated care, control is the thing that cashes the check.

You Can’t Reshore Biology Overnight

The BIOSECURE Act was an early signal that the policy world is waking up. The U.S. is moving to limit federal work with firms like WuXi, BGI, and MGI. The subtext is the real story. Biology is not just a supply chain anymore. It is infrastructure. And infrastructure has borders.

The transition is going to sting, in very predictable ways:

- Cost shock. Western CROs cost more. Related but more important – they take more time.

- Capacity crunch. We do not have the throughput.

- Supply fragility. Even if we reshore labs, we still depend on imported precursors, reagents, and animal models.

We saw this in semiconductors. When the supply chain became a strategic liability, the response was not a better procurement spreadsheet, it was to rebuild domestic fabs.

In biology, we are walking into the same trap: fabless biology, assuming the factory can live offshore while the innovation stays at home. Workforces take longer to train. If you have not been paying for your own factory floor, you do not have one sitting idle just in case.

To be clear, late-stage clinical execution and regulatory work are still largely run by Western global CROs, much as semiconductor design sits in the West while fabrication happens in the East. The vulnerability sits upstream, and it rhymes on the CDMO side too: China has scale in standardized, high-volume work, but the differentiated edge is fragmented and hard to rebuild once you stop paying for it.

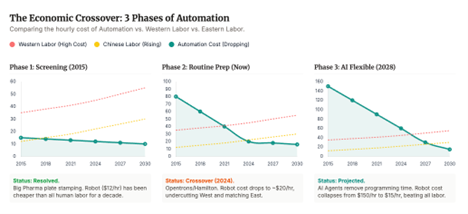

There is a reason Chinese providers dominate large parts of preclinical and early manufacturing throughput today. It is people. Highly skilled, deeply trained labor, scaled to industrial levels, at cost structures the West has not matched in decades. It is not primarily about cutting corners. It is about throughput.

But the ground may be shifting again. Robotics, automation and agentic AI workflows are advancing faster than most biotech boards are planning for. Work that used to be manual, linear, and low margin is becoming programmable, parallel, and scalable.

Lab automation is no longer a few pipetting arms. We are heading toward closed loop systems that can design experiments, optimize conditions, execute assays, interpret results, and propose the next hypothesis, over and over, without losing momentum.

If that trajectory holds, we will get a new kind of cloud lab. Not just shared infrastructure, but intelligent infrastructure. Agentic, increasingly autonomous, and easier to localize. The cost of spinning up a Western CRO could fall sharply. The value of doing AI augmented experimentation under your own roof could rise. And the moat moves again, toward the ability to iterate fast, reliably, and sovereignly, because in biology, the loop is the factory.

Own the Loop, Not Just the Molecule

Here’s what I hope the JPM talking points become. Not another round of macro agreement where everyone nods about sovereignty and geopolitics and then goes back to optimizing cost.

The real question for founders and investors is much sharper.

Where is your actual IP?

Is it in the molecule, or in the factory that can reliably make and improve it?

Is it in the deck, or in the loop that designed the hypothesis, tested it, debugged reality, and iterated until it worked?

Startups love the phrase asset light. Too often it is code for capability light. If you outsource the work that teaches you, you are not building a company. You are running a spreadsheet with a lab receipt attached.

For investors, this forces a reset in what counts as defensible. The molecule is only part of the story. The loop, the lab, the process intuition, those are strategic assets. If they live in China, you do not own them. If they live inside a system you control, you do.

The best companies in this cycle will not just own patents. They will own the learning curve. As we approach an AI-centric world, the “10 years to clinic” metric collapses. The cycle of Design → Make → Test → Learn shrinks from months to days.

The “process IP” we talked about is no longer human know-how; it is model weights.

So what do we do about it?

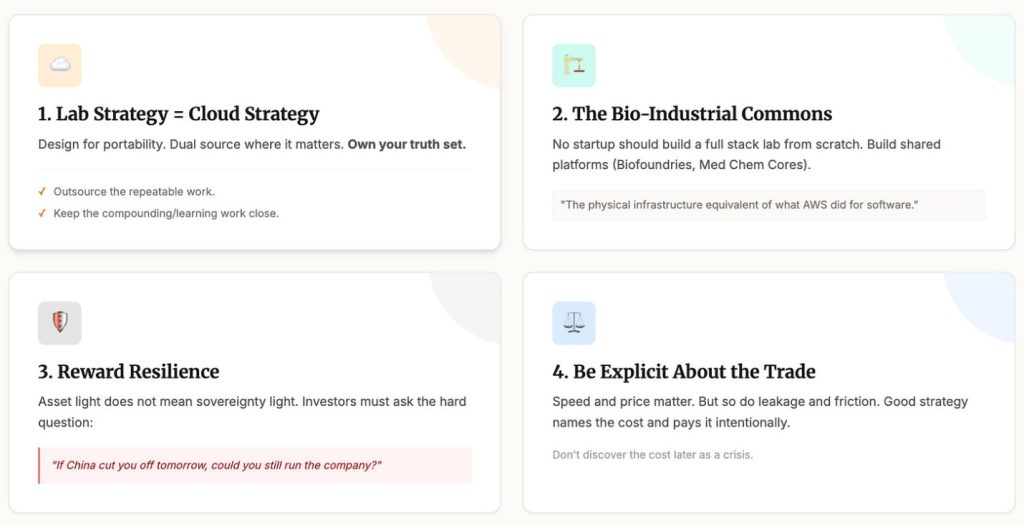

First, treat CRO strategy like cloud strategy. Design for portability. Dual source where it matters. Own your truth set. Keep local capacity for anything that teaches you. Outsource the repeatable work. Keep the compounding work close.
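One way to make that checklist concrete is a simple audit over your workstreams. The sketch below is a hypothetical illustration, not a standard tool: the `Workstream` fields and the two rules in `portability_gaps` are assumptions that encode "dual source where it matters" and "keep local capacity for anything that teaches you".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workstream:
    name: str
    providers: tuple      # external providers able to run this work today
    teaches_us: bool      # does doing this work compound into know-how?
    in_house: bool        # do we keep local capacity for it?


def portability_gaps(workstreams):
    """Return (name, reason) pairs for workstreams that break the rules of thumb."""
    gaps = []
    for w in workstreams:
        if w.in_house:
            continue  # local capacity exists, so the work is portable by default
        if w.teaches_us:
            # Compounding work that lives only outside the company.
            gaps.append((w.name, "compounding work has no local capacity"))
        elif len(w.providers) < 2:
            # Repeatable work is fine to outsource, but not to a single source.
            gaps.append((w.name, "single-sourced"))
    return gaps
```

Fed a list of workstreams, `portability_gaps` returns the ones where a single cut-off would hurt. Repeatable work with two or more providers passes. Work that teaches you but has no in-house capacity is flagged first, since that is where the dependency compounds.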

Second, invest in the bio industrial commons. No startup should build a full stack lab from scratch. But we can build shared platforms that many startups can plug into. Biofoundries. Medchem cores. Assay centers. It is the physical infrastructure equivalent of what cloud did for software.

Third, reward resilience in fundraising. Asset light does not have to mean sovereignty light. Investors should ask a simple question.

If China cut you off tomorrow, could you still run the company?

Fourth, be explicit about the trade. Speed matters. Price matters. But so do concentration risk, leakage, and geopolitical friction. Good strategy names the cost and pays it intentionally, instead of discovering it later as a crisis.

Because this was never just about cost.

Outsourcing helped finance a Chinese bio industrial base with the scale, capability, and learning velocity to become a competitor. WuXi alone targeting 100,000 litres of solid-phase peptide reactor volume in 2025 is not just contract research. That’s sovereign capability.

The Apple and Tesla lesson was not ‘never build abroad’. It was simpler. If you build your business on top of someone else’s factory, do not be surprised when the factory becomes the business.

Biotech is there now.

It is time to own enough of the factory again.

Not everywhere. Not always. But enough to keep the loop tight, the learning local, and the innovation portable. Outsourcing should be a choice, not a dependency.

And then there is the final conclusion, which is not linear. It is orthogonal.

This is not only about plugging holes in the current system or rebuilding lost capacity. It is about asking the bigger question. What if the right answer is not ‘fix pharma’? What if the right answer is ‘build the first $3 trillion biology company’?

The analogy is real. Google did not just crawl websites. It built infrastructure that made the internet usable. It sat between the user and the data. It turned distributed information into a loop. It monetized not the asset, but the search.

So what is the biological equivalent?

A system that closes the loop between data, models, wet lab execution, and patient outcomes. A sovereign engine. A full stack, AI native platform that does not just license molecules, but learns the entire process of making them better.

Not a CRO. Not a pharma company. Something new. Something foundational.

That is the orthogonal bet. Do not just fix the outsourcing mistake. Leapfrog it.

And that’s what I hope is talked about at the parties and dinners of JPM.

Editor’s Note: John Cassidy is a general partner with Kindred Capital in London. He is a member of the Timmerman Traverse for Damon Runyon Cancer Research Foundation team preparing to climb Kilimanjaro in February. A version of this article was first published on John’s Substack.